++++

++++Notebook converted from Jupyter for blog publishing.

03-SVM-Project-Exercise-Solutions

Support Vector Machines

Exercise - Solutions

Fraud in Wine

Wine fraud relates to the commercial aspects of wine. The most prevalent type of fraud is one where wines are adulterated, usually with the addition of cheaper products (e.g. juices) and sometimes with harmful chemicals and sweeteners (compensating for color or flavor).

Counterfeiting and the relabelling of inferior and cheaper wines to more expensive brands is another common type of wine fraud.

Project Goals

A distribution company that was recently a victim of fraud has completed an audit of various samples of wine through the use of chemical analysis on samples. The distribution company specializes in exporting extremely high quality, expensive wines, but was defrauded by a supplier who was attempting to pass off cheap, low quality wine as higher grade wine. The distribution company has hired you to attempt to create a machine learning model that can help detect low quality (a.k.a "fraud") wine samples. They want to know if it is even possible to detect such a difference.

Data Source: P. Cortez, A. Cerdeira, F. Almeida, T. Matos and J. Reis. Modeling wine preferences by data mining from physicochemical properties. In Decision Support Systems, Elsevier, 47(4):547-553, 2009.

TASK: Your overall goal is to use the wine dataset shown below to develop a machine learning model that attempts to predict if a wine is "Legit" or "Fraud" based on various chemical features. Complete the tasks below to follow along with the project.

Complete the Tasks in bold

TASK: Run the cells below to import the libraries and load the dataset.

import numpy as np

import pandas as pd

import seaborn as sns

import matplotlib.pyplot as pltdf = pd.read_csv("../DATA/wine_fraud.csv")df.head()fixed acidity

volatile acidity

citric acid

residual sugar

chloridesTASK: What are the unique variables in the target column we are trying to predict (quality)?

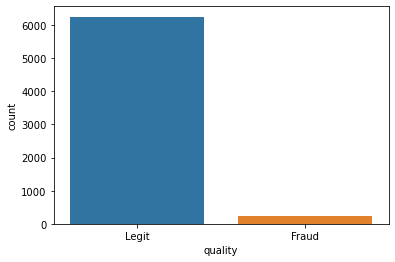

df['quality'].unique()array(['Legit', 'Fraud'], dtype=object)TASK: Create a countplot that displays the count per category of Legit vs Fraud. Is the label/target balanced or unbalanced?

sns.countplot(x='quality',data=df)<AxesSubplot:xlabel='quality', ylabel='count'>

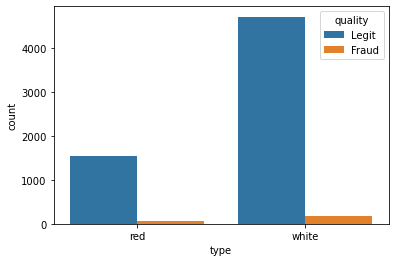

TASK: Let's find out if there is a difference between red and white wine when it comes to fraud. Create a countplot that has the wine type on the x axis with the hue separating columns by Fraud vs Legit.

sns.countplot(x='type',hue='quality',data=df)<AxesSubplot:xlabel='type', ylabel='count'>

TASK: What percentage of red wines are Fraud? What percentage of white wines are fraud?

reds = df[df["type"]=='red']whites = df[df["type"]=='white']print("Percentage of fraud in Red Wines:")

print(100* (len(reds[reds['quality']=='Fraud'])/len(reds)))Percentage of fraud in Red Wines:

3.9399624765478425print("Percentage of fraud in White Wines:")

print(100* (len(whites[whites['quality']=='Fraud'])/len(whites)))Percentage of fraud in White Wines:

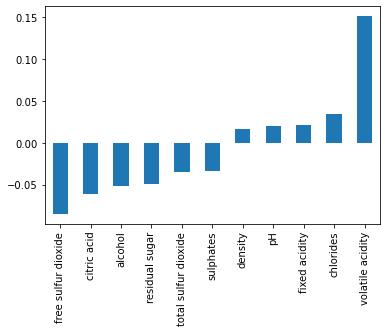

3.7362188648427925TASK: Calculate the correlation between the various features and the "quality" column. To do this you may need to map the column to 0 and 1 instead of a string.

df['Fraud']= df['quality'].map({'Legit':0,'Fraud':1})df.corr()['Fraud']fixed acidity 0.021794

volatile acidity 0.151228

citric acid -0.061789

residual sugar -0.048756

chlorides 0.034499TASK: Create a bar plot of the correlation values to Fraudlent wine.

# CODE HEREdf.corr()['Fraud'][:-1].sort_values().plot(kind='bar')<AxesSubplot:>

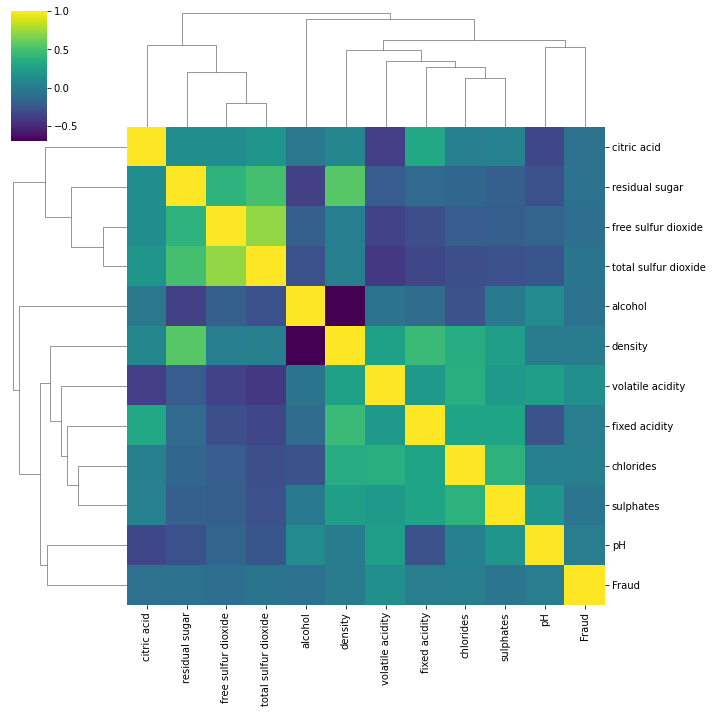

TASK: Create a clustermap with seaborn to explore the relationships between variables.

sns.clustermap(df.corr(),cmap='viridis')<seaborn.matrix.ClusterGrid at 0x231b34be088>

Machine Learning Model

TASK: Convert the categorical column "type" from a string or "red" or "white" to dummy variables:

# CODE HEREdf['type'] = pd.get_dummies(df['type'],drop_first=True)df = df.drop('Fraud',axis=1)TASK: Separate out the data into X features and y target label ("quality" column)

X = df.drop('quality',axis=1)

y = df['quality']TASK: Perform a Train|Test split on the data, with a 10% test size. Note: The solution uses a random state of 101

from sklearn.model_selection import train_test_splitX_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.1, random_state=101)TASK: Scale the X train and X test data.

from sklearn.preprocessing import StandardScalerscaler = StandardScaler()scaled_X_train = scaler.fit_transform(X_train)

scaled_X_test = scaler.transform(X_test)TASK: Create an instance of a Support Vector Machine classifier. Previously we have left this model "blank", (e.g. with no parameters). However, we already know that the classes are unbalanced, in an attempt to help alleviate this issue, we can automatically adjust weights inversely proportional to class frequencies in the input data with a argument call in the SVC() call. Check out the [documentation for SVC] online and look up what the argument\parameter is.

# CODE HEREfrom sklearn.svm import SVCsvc = SVC(class_weight='balanced')TASK: Use a GridSearchCV to run a grid search for the best C and gamma parameters.

# CODE HEREfrom sklearn.model_selection import GridSearchCVparam_grid = {'C':[0.001,0.01,0.1,0.5,1],'gamma':['scale','auto']}

grid = GridSearchCV(svc,param_grid)grid.fit(scaled_X_train,y_train)GridSearchCV(estimator=SVC(class_weight='balanced'),

param_grid={'C': [0.001, 0.01, 0.1, 0.5, 1],

'gamma': ['scale', 'auto']})grid.best_params_{'C': 1, 'gamma': 'auto'}TASK: Display the confusion matrix and classification report for your model.

from sklearn.metrics import confusion_matrix,classification_reportgrid_pred = grid.predict(scaled_X_test)confusion_matrix(y_test,grid_pred)array([[ 17, 10],

[ 92, 531]], dtype=int64)print(classification_report(y_test,grid_pred)) precision recall f1-score support

Fraud 0.16 0.63 0.25 27

Legit 0.98 0.85 0.91 623

TASK: Finally, think about how well this model performed, would you suggest using it? Realistically will this work?

# View video for full discussion on this.