Boost model accuracy on commonsense reasoning tasks by instructing the LLM to generate relevant knowledge before making a final prediction.

Generated Knowledge Prompting 📚

This content is adapted from Prompting Guide: Generated Knowledge. It has been curated and organized for educational purposes on this portfolio. No copyright infringement is intended.

Introduction

Incorporating external context is a powerful way to improve LLM accuracy. But what if the model itself could be used to generate that context?

Generated Knowledge Prompting, introduced by Liu et al. (2022), uses the LLM to generate potentially useful information about a topic before it attempts to answer a question. The technique is particularly effective for tasks that require broad commonsense reasoning.

The Knowledge Gap

Standard zero-shot prompts often fail when a task requires specific real-world knowledge that isn't immediately obvious in the prompt text.

Prompt:

"Part of golf is trying to get a higher point total than others. Yes or No?"

Output: Yes. (Incorrect)

This mistake highlights a limitation: the model has the "knowledge" of golf scoring deep in its weights, but it needs to be explicitly "surfaced" to influence the prediction correctly.

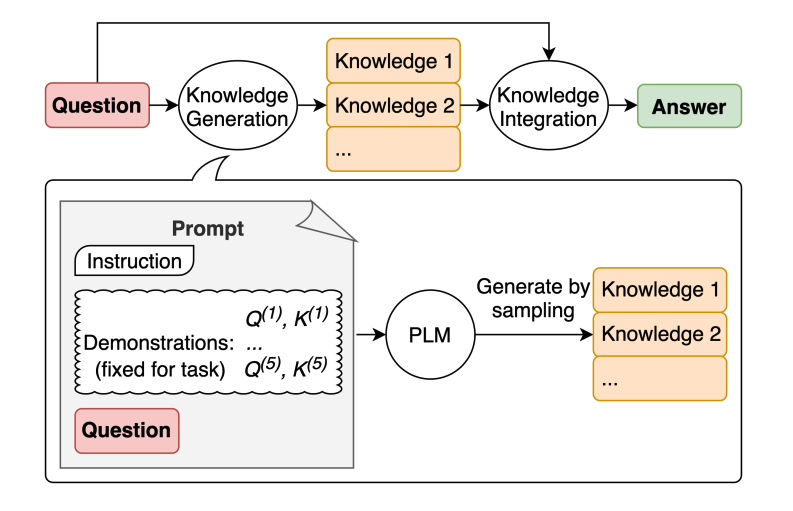

The Two-Step Process

1. Generating Knowledge

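First, we use a few-shot prompt to "bootstrap" the model into generating relevant facts about the input. The prompt consists of a handful of Input/Knowledge demonstrations followed by the new input; only that final part is shown here.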

Prompt:

"Input: Part of golf is trying to get a higher point total than others.

Knowledge:"

Output:

"The objective of golf is to play a set of holes in the least number of strokes. A round of golf typically consists of 18 holes... The player with the lowest score wins the game."
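As a rough illustration of how such a few-shot prompt might be assembled, here is a minimal Python sketch. The two demonstrations and the names `DEMONSTRATIONS` and `build_knowledge_prompt` are hypothetical, chosen only for this example; they are not the demonstrations used by Liu et al. (2022).

```python
# Hypothetical Input/Knowledge demonstrations (illustrative only);
# replace them with examples that match your own task.
DEMONSTRATIONS = [
    (
        "Penguins can fly long distances.",
        "Penguins are flightless birds; their wings have evolved into "
        "flippers used for swimming.",
    ),
    (
        "Water boils at a lower temperature on a high mountain than at sea level.",
        "Atmospheric pressure drops with altitude, and lower pressure "
        "lowers the boiling point of water.",
    ),
]


def build_knowledge_prompt(statement, demonstrations=DEMONSTRATIONS):
    """Return a few-shot prompt that ends with an open 'Knowledge:' slot."""
    blocks = [f"Input: {inp}\nKnowledge: {know}" for inp, know in demonstrations]
    # The new input goes last, with the knowledge left for the model to fill in.
    blocks.append(f"Input: {statement}\nKnowledge:")
    return "\n\n".join(blocks)


prompt = build_knowledge_prompt(
    "Part of golf is trying to get a higher point total than others."
)
print(prompt)
```

The printed string is what would be sent to the model; whatever the model writes after the final "Knowledge:" is the generated knowledge used in the next step.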

2. Knowledge Integration

Next, we feed that generated knowledge back into the prompt alongside the original question.

Prompt:

"Question: Part of golf is trying to get a higher point total than others. Yes or No?

Knowledge: [Generated text above...]

Explain and Answer:"

Output:

"No, the objective of golf is not to get a higher point total than others. Rather, the objective is to play a set of holes in the least number of strokes... The player with the lowest score wins."
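The two steps can be chained in a few lines. The sketch below is one possible arrangement, not a reference implementation: `generate` stands for any function that sends a prompt to a model and returns its text, and `fake_llm` is a canned stand-in (an assumption made so the example runs offline). The few-shot demonstrations from step 1 are left out for brevity.

```python
from typing import Callable


def build_answer_prompt(question: str, knowledge: str) -> str:
    """Combine the question and the generated knowledge into the second prompt."""
    return (
        f"Question: {question}\n\n"
        f"Knowledge: {knowledge.strip()}\n\n"
        "Explain and Answer:"
    )


def answer_with_knowledge(statement: str, generate: Callable[[str], str]) -> str:
    """Run both steps: generate knowledge, then answer with it in context."""
    # Step 1: ask the model for knowledge about the statement.
    knowledge = generate(f"Input: {statement}\n\nKnowledge:")
    # Step 2: feed that knowledge back alongside the original question.
    question = f"{statement} Yes or No?"
    return generate(build_answer_prompt(question, knowledge))


def fake_llm(prompt: str) -> str:
    """Hypothetical canned model so the sketch runs without an API."""
    if prompt.endswith("Explain and Answer:"):
        return "No. The player with the lowest score wins."
    return (
        "The objective of golf is to play a set of holes "
        "in the least number of strokes."
    )


result = answer_with_knowledge(
    "Part of golf is trying to get a higher point total than others.", fake_llm
)
print(result)
```

In practice `fake_llm` would be replaced by a call to whichever model client you use; keeping it as a plain callable means the prompting logic does not depend on any particular API.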

Why it Works

Image Source: Liu et al. (2022)

By forcing the model to articulate the rules or facts first, you are effectively performing a "knowledge-retrieval" step: the relevant facts are pulled out of the model's weights and placed in the prompt context. This "surfaced" knowledge then acts as a strong anchor that steers the model away from shallow pattern-matching errors (such as assuming "higher score = better", as in most other sports).

Next Level: Generated knowledge works well for information the model already holds. For live or external data, Retrieval Augmented Generation (RAG) pulls facts from external databases instead.