Explore an advanced, structure-oriented technique that prioritizes syntax and abstract patterns over specific content to improve LLM reasoning.

Meta Prompting 🧩

This content is adapted from Prompting Guide: Meta Prompting. It has been curated and organized for educational purposes on this portfolio. No copyright infringement is intended.

Introduction

Meta Prompting is an advanced technique that focuses on the structural and syntactical aspects of tasks rather than their specific content details. The goal is to construct a more abstract, structured way of interacting with language models, emphasizing the form and pattern of information over traditional content-centric methods.

Key Characteristics

According to Zhang et al. (2024), meta prompting is defined by several unique properties:

- Structure-oriented: Prioritizes the format and pattern of problems and solutions.

- Syntax-focused: Uses syntax as a guiding template for the expected response.

- Abstract examples: Employs framework-level examples that illustrate the structure without focusing on specific details.

- Categorical approach: Draws from type theory to emphasize the categorization and logical arrangement of components in a prompt.
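To make these properties concrete, here is a minimal sketch of a structure-only prompt builder. The function name `build_meta_prompt` and the wording of the template are illustrative assumptions, not text taken from Zhang et al. (2024); the point is only that the prompt describes the shape of the answer and contains no worked example.

```python
def build_meta_prompt(problem: str) -> str:
    """Return a prompt that specifies the form of the answer, not its content.

    The template wording is hypothetical and only illustrates the idea.
    """
    template = (
        "Problem: {problem}\n"
        "\n"
        "Solution structure:\n"
        "1. Restate what is given and what must be found.\n"
        "2. List the reasoning steps, one per line, each prefixed with 'Step N:'.\n"
        "3. End with a single line of the form 'Final answer: <answer>'.\n"
    )
    # Only the problem statement varies; the structural scaffold stays fixed.
    return template.format(problem=problem)


if __name__ == "__main__":
    print(build_meta_prompt("What is 2 + 2?"))
```

The same scaffold can be reused for any problem of the same kind, which is what makes the approach structure-oriented rather than content-driven.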

Meta Prompting vs. Few-Shot

While few-shot prompting is content-driven (providing specific examples), meta prompting focuses on the underlying structure.

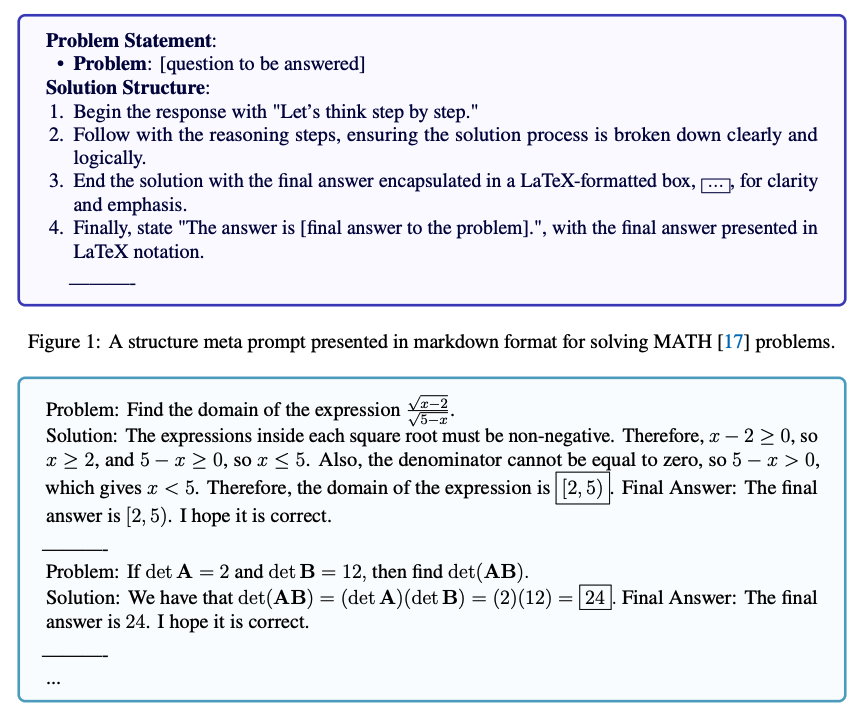

Image Source: Zhang et al. (2024)

Advantages of the Meta Approach:

- Token Efficiency: Reduces the number of tokens required by focusing on structure rather than detailed content.

- Fair Comparison: Minimizes the influence of specific examples, providing a better baseline for comparing different models.

- Zero-Shot Efficacy: Can be viewed as a high-level form of zero-shot prompting where the model relies on its innate knowledge of structural logic.
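The token-efficiency point can be illustrated with a rough comparison. In the sketch below, the example questions, the helper names, and the use of a whitespace word count as a stand-in for a real tokenizer are all assumptions made for illustration; actual savings depend on the tokenizer and on how long the few-shot examples are.

```python
# Hypothetical worked examples that a few-shot prompt would carry along.
FEW_SHOT_EXAMPLES = [
    ("What is 12 * 4?", "12 * 4 = 48. Final answer: 48"),
    ("What is 9 + 16?", "9 + 16 = 25. Final answer: 25"),
]


def few_shot_prompt(question: str) -> str:
    """Content-driven prompt: concrete solved examples, then the new question."""
    parts = [f"Q: {q}\nA: {a}" for q, a in FEW_SHOT_EXAMPLES]
    parts.append(f"Q: {question}\nA:")
    return "\n\n".join(parts)


def meta_prompt(question: str) -> str:
    """Structure-driven prompt: only the expected answer format, no examples."""
    return f"Q: {question}\nA: <reasoning steps>. Final answer: <number>"


def rough_token_count(text: str) -> int:
    """Crude proxy for token count: number of whitespace-separated pieces."""
    return len(text.split())


if __name__ == "__main__":
    question = "What is 7 * 8?"
    print("few-shot:", rough_token_count(few_shot_prompt(question)))
    print("meta:    ", rough_token_count(meta_prompt(question)))
```

In this toy setup the meta prompt is shorter because it carries no solved examples; the gap widens as the few-shot examples get longer or more numerous.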

Applications

Meta prompting is particularly beneficial for tasks where the structural pattern of problem-solving is more important than the specific data points:

- Mathematical Problem-Solving: Focuses on the logic of the proof rather than the specific numbers.

- Coding Challenges: Emphasizes algorithmic patterns and syntax structures.

- Theoretical Queries: Directs the model to follow specific logical frameworks or taxonomies.
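As a sketch of how one structural template per application area might look, the snippet below keeps a small table of answer structures keyed by task type. The keys, the step wording, and the function `meta_prompt_for` are hypothetical and chosen only to mirror the three application areas listed above.

```python
# Hypothetical answer structures, one per application area.
STRUCTURES = {
    "math": [
        "State the claim to be proven or the quantity to be computed.",
        "Give the reasoning as numbered steps, each justified by a rule or a prior step.",
        "Conclude with 'Final answer: <result>'.",
    ],
    "code": [
        "Name the algorithmic pattern being used.",
        "Give the function signature.",
        "Provide the implementation in one code block.",
        "State time and space complexity.",
    ],
    "theory": [
        "Name the framework or taxonomy being applied.",
        "Classify the subject under that framework.",
        "Justify the classification in one short paragraph.",
    ],
}


def meta_prompt_for(task_type: str, task: str) -> str:
    """Build a structure-only prompt for the given task type.

    Raises KeyError if task_type is not one of the keys in STRUCTURES.
    """
    steps = STRUCTURES[task_type]
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    return f"Task: {task}\n\nRespond using exactly this structure:\n{numbered}\n"


if __name__ == "__main__":
    print(meta_prompt_for("code", "Reverse a singly linked list."))
```

Keeping the structures in data rather than in prose makes it easy to swap the task while holding the expected response format constant.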

Limitations: Meta prompting assumes the LLM has innate knowledge about the task structure. Performance may deteriorate for highly unique or novel tasks where the model cannot generalize the abstract pattern.

In the next section, we will explore Self-Consistency, a technique used to improve the reliability of a model's reasoning.