Optimize your LLM performance by dynamically selecting the most effective task-specific exemplars through uncertainty-based human annotation.

Active-Prompt ⚡

This content is adapted from Prompting Guide: Active-Prompt. It has been curated and organized for educational purposes on this portfolio. No copyright infringement is intended.

Introduction

Standard Chain-of-Thought (CoT) methods typically rely on a fixed set of human-annotated exemplars. However, these fixed examples may not be the most effective for every specific task.

Active-Prompt, proposed by Diao et al. (2023), addresses this by adapting LLMs to task-specific example prompts: it uses an uncertainty-based selection process to decide which questions should be annotated with human-written CoT reasoning.

How it Works

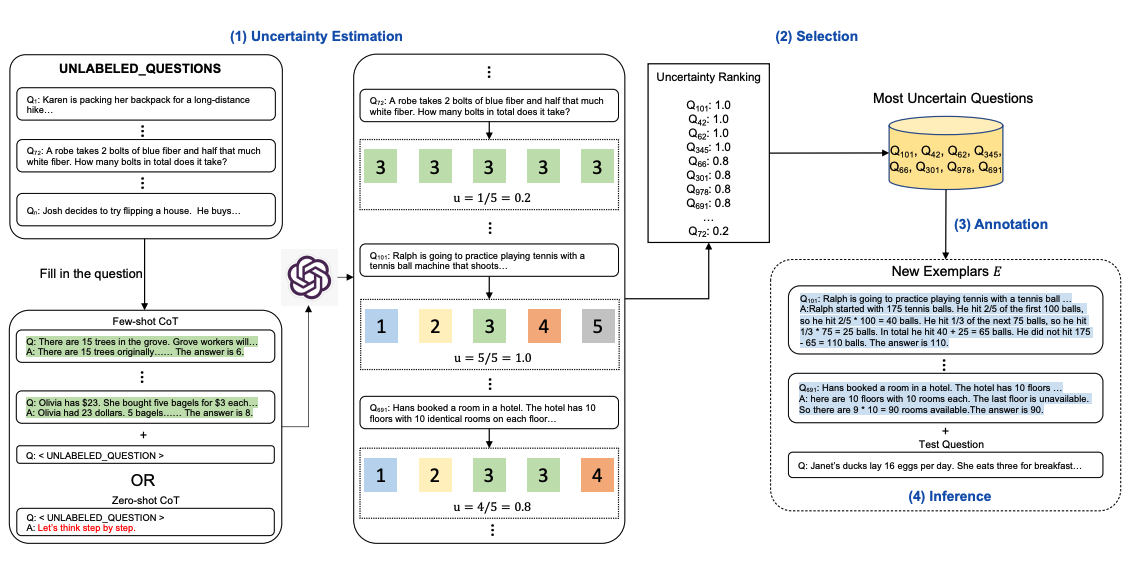

Active-Prompt moves away from "one-size-fits-all" exemplars by identifying the most difficult or "uncertain" questions in a dataset and prioritizing them for human annotation.

Image Source: Diao et al. (2023)

The 4-Step Process:

- Uncertainty Querying: The LLM is queried with a raw set of training questions (with or without a few initial CoT examples).

- Generation: For each question, the model generates k possible answer candidates.

- Uncertainty Calculation: An uncertainty metric is calculated based on these k answers. Typically, high disagreement between the candidates indicates high uncertainty.

- Selection & Annotation: The most uncertain questions are selected for human annotation, where annotators write high-quality CoT reasoning chains for them. These new exemplars then serve as the few-shot examples in the prompt used to answer the remaining questions.
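The four steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: `sample_answer` is a hypothetical callable standing in for one sampled LLM completion reduced to its final answer, and the canned sampler and question strings exist only so the sketch runs without a model. Uncertainty here is the fraction of distinct answers among the k samples.

```python
from typing import Callable, Dict, List, Tuple


def rank_by_uncertainty(
    questions: List[str],
    sample_answer: Callable[[str], str],  # hypothetical: one sampled final answer
    k: int = 5,
) -> List[Tuple[str, float]]:
    """Score each question by the fraction of distinct answers among k samples."""
    scored = []
    for question in questions:
        answers = [sample_answer(question) for _ in range(k)]
        scored.append((question, len(set(answers)) / k))
    # Most uncertain first; sorted() is stable, so ties keep input order.
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


def select_for_annotation(
    questions: List[str],
    sample_answer: Callable[[str], str],
    k: int = 5,
    n: int = 2,
) -> List[str]:
    """Return the n most uncertain questions, to be handed to human annotators."""
    return [q for q, _ in rank_by_uncertainty(questions, sample_answer, k)[:n]]


def make_canned_sampler(canned: Dict[str, List[str]]) -> Callable[[str], str]:
    """Stand-in for an LLM: replays fixed answers so the demo is deterministic."""
    calls = {q: 0 for q in canned}

    def sample(question: str) -> str:
        i = calls[question]
        calls[question] += 1
        return canned[question][i % len(canned[question])]

    return sample


# Illustrative data only: three questions with increasingly inconsistent answers.
canned = {
    "What is 2 + 2?": ["4", "4", "4", "4", "4"],
    "How many weekdays fall in a 10-day span?": ["7", "7", "8", "7", "7"],
    "How old is the captain?": ["31", "40", "28", "35", "52"],
}
picked = select_for_annotation(list(canned), make_canned_sampler(canned), k=5, n=2)
print(picked)
```

In a real setup, the selected questions would go to annotators, and their written reasoning chains would become the few-shot exemplars in the final prompt.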

Why it's Effective

By focusing human effort on the questions the model finds most confusing, Active-Prompt aims the few-shot examples in the prompt at the model's reasoning gaps rather than at questions it already answers consistently. Diao et al. (2023) report that this improves task-specific accuracy compared with random or fixed exemplar selection.

Key Metric: Uncertainty is often measured as the "disagreement" among the k generated answers, for example the fraction of distinct final answers. If the model produces widely different answers to the same question, that question is a strong candidate for human annotation.
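To make the metric concrete, here is a small sketch of two ways to score a list of k sampled answers. The function names are illustrative. Disagreement follows the description above (distinct answers divided by k). Entropy over the answer frequencies is included as an alternative and is an assumption here, since the text above does not spell it out.

```python
import math
from collections import Counter
from typing import Sequence


def disagreement(answers: Sequence[str]) -> float:
    """Fraction of distinct answers: 1/k when all agree, 1.0 when all differ."""
    return len(set(answers)) / len(answers)


def answer_entropy(answers: Sequence[str]) -> float:
    """Shannon entropy (in nats) of the empirical answer distribution."""
    total = len(answers)
    return sum(
        -(count / total) * math.log(count / total)
        for count in Counter(answers).values()
    )


# Illustrative samples: five answers to the same question, three distinct values.
samples = ["12", "12", "15", "12", "9"]
print(disagreement(samples))    # 3 distinct answers out of 5
print(answer_entropy(samples))  # higher when answers are spread more evenly
```

Disagreement ignores how often each answer appears, while entropy also reflects how evenly the answers are spread, so the two can rank questions differently.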

[!TIP] Active-Prompt is an excellent strategy for high-stakes enterprise applications where you want to maximize accuracy while minimizing the cost of manual human annotation.