Why do a few words appear everywhere while most words barely appear at all? Discover the power law that governs all human communication.

Zipf's Law: The Mathematics of Language 📈

If you take any large body of text and rank the words by frequency, a strange pattern emerges: the most frequent word appears about twice as often as the second most frequent, three times as often as the third, and so on. This is Zipf's Law.

This content is adapted from A deep understanding of AI language model mechanisms. It has been curated and organized for educational purposes on this portfolio. No copyright infringement is intended.

1. The Power Law in Action

Zipf's Law is a "Power Law" distribution. In simple terms: word usage is highly unequal. A tiny fraction of tokens (like "the", "a", "is") do most of the heavy lifting, while the "Long Tail" contains a huge number of rare words that the model might see only once in its entire training run.
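One common way to write this down is sketched here. The symbols are introduced only for illustration: $f(r)$ is the frequency of the item at rank $r$, $C$ is a constant set by the size of the text, and $s$ is the exponent, which is close to 1 in the classic statement of the law.

$$
f(r) = \frac{C}{r^{s}}, \qquad s \approx 1
$$

Taking logarithms of both sides turns the curve into a line:

$$
\log f(r) = \log C - s \, \log r
$$

So on log-log axes a power law appears as a straight line with slope $-s$. With $s = 1$, the ratios $f(1)/f(2) = 2$ and $f(1)/f(3) = 3$ recover the "twice as often, three times as often" pattern described in the introduction.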

2. Analyzing Literature 📚

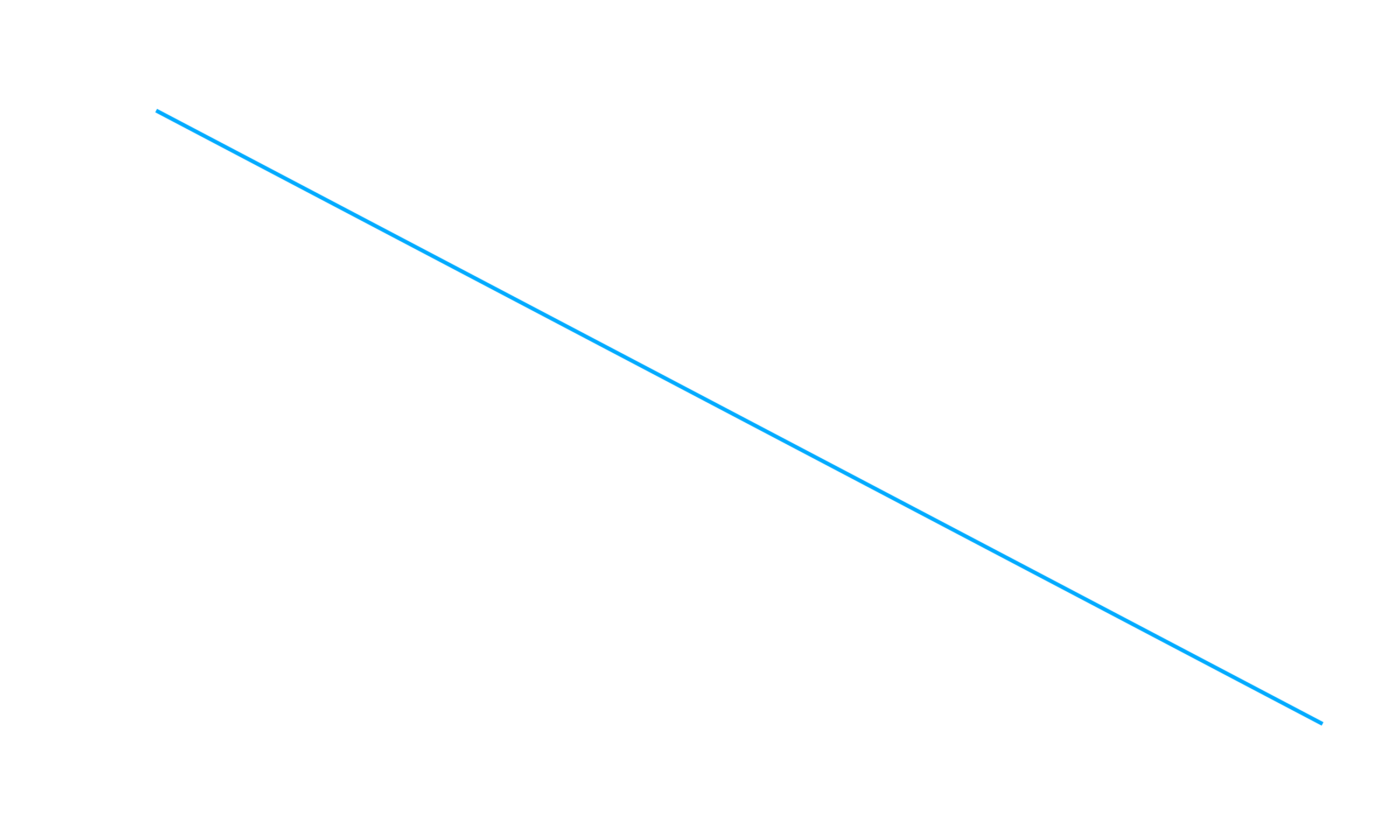

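Let's test this law on 10 classic books from Project Gutenberg. The code below runs the analysis on one of them, Frankenstein: it counts the frequency of every unique token and plots the counts against rank on a Log-Log scale. If Zipf's Law holds, the points should fall close to a straight line with a negative slope.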

import numpy as np

import matplotlib.pyplot as plt

import tiktoken

import requests

tokenizer = tiktoken.get_encoding('cl100k_base')

book_code = '84' # Frankenstein

text = requests.get(f'https://www.gutenberg.org/cache/epub/{book_code}/pg{book_code}.txt').text

# Get token frequencies

tokens = tokenizer.encode(text)

ids, counts = np.unique(tokens, return_counts=True)

# Sort by frequency (descending)

sorted_counts = np.sort(counts)[::-1]

# Plot

plt.figure(figsize=(8, 5))

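# Ranks start at 1: a log axis cannot show rank 0, so the top token would be dropped

ranks = np.arange(1, len(sorted_counts) + 1)

plt.plot(ranks, sorted_counts, '.')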

plt.xscale('log')

plt.yscale('log')

plt.title("Zipf's Law in 'Frankenstein'")

plt.xlabel('Token Rank (Log)')

plt.ylabel('Token Frequency (Log)')

plt.grid(True, alpha=0.3)

plt.show()
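To go beyond eyeballing the plot, one option is to fit a straight line to the points in log-log space and read off the slope. The sketch below is a plain least-squares fit using only the standard library; the function name `fit_zipf_exponent` is hypothetical, and the data is synthetic so the expected slope is known in advance.

```python
import math

def fit_zipf_exponent(sorted_counts):
    """Least-squares fit of log(count) against log(rank).

    `sorted_counts` must be in descending order and contain at least two
    positive values; ranks are assigned starting at 1.
    Returns (slope, intercept) of the fitted line in log-log space.
    """
    xs = [math.log(rank) for rank in range(1, len(sorted_counts) + 1)]
    ys = [math.log(count) for count in sorted_counts]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Synthetic check: counts that follow f(r) = 1000 / r exactly.
synthetic_counts = [1000 / rank for rank in range(1, 501)]
slope, intercept = fit_zipf_exponent(synthetic_counts)
print(f"slope = {slope:.3f}")
```

The same function should accept the `sorted_counts` array from the snippet above. On real text, expect the fitted slope to depend on which ranks are included, because the many tokens that occur only once or twice form flat steps at the end of the curve.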

3. Results: Characters vs. Tokens

When we compare raw characters to BPE tokens, we see two different types of curves:

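- Characters: The curve is very "steep" then flat. Only about 100 distinct characters occur in typical English text, so the "Long Tail" is short.

- Tokens: The curve is a long, nearly straight line spanning several orders of magnitude. The tokens from GPT-4's cl100k_base vocabulary (about 100,000 entries) that occur in the text follow Zipf's Law closely, typically with some deviation at the very top and among tokens seen only once or twice.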
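Both curves can be produced by the same counting step; only the unit being counted changes. The helper below is a minimal sketch (the name `rank_frequency` is hypothetical) that works on any sequence: passing a string counts characters, passing a list counts its items.

```python
from collections import Counter

def rank_frequency(symbols):
    """Return [(rank, count), ...] with rank 1 for the most frequent symbol."""
    counts = sorted(Counter(symbols).values(), reverse=True)
    return list(enumerate(counts, start=1))

# A string iterates character by character; a list iterates item by item.
char_curve = rank_frequency("abracadabra")
word_curve = rank_frequency("to be or not to be".split())
print(char_curve)
print(word_curve)
```

With the variables from the earlier snippet, `rank_frequency(text)` would give the character curve and `rank_frequency(tokens)` the token curve, ready to plot on the same log-log axes.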

The 80/20 Rule: In most texts, the top 100-200 tokens account for roughly half of the total volume, a concentration in the same spirit as the Pareto principle. This is part of why BPE is so effective: by giving the most frequent strings their own tokens, it encodes the bulk of a text compactly.
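The coverage figure is easy to check for any text. The sketch below computes the share of all token occurrences covered by the `k` most frequent tokens; `top_k_coverage` is a hypothetical helper, and the toy sentence stands in for a real token stream.

```python
from collections import Counter

def top_k_coverage(tokens, k):
    """Fraction of all token occurrences covered by the k most frequent tokens.

    Assumes `tokens` is non-empty.
    """
    counts = Counter(tokens)
    total = sum(counts.values())
    covered = sum(count for _, count in counts.most_common(k))
    return covered / total

toy_tokens = "the cat sat on the mat and the dog sat on the rug".split()
print(top_k_coverage(toy_tokens, 1))  # share covered by the single most frequent token
print(top_k_coverage(toy_tokens, 3))  # share covered by the top three
```

Calling `top_k_coverage(tokens, 200)` on the encoded book from the earlier snippet would show how close that particular text comes to the 50% mark.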

4. Why This Matters for AI

- Vocabulary Selection: When building a tokenizer, frequency decides which subwords are worth keeping. BPE-style training adds the most frequent pairs first and stops at a fixed vocabulary size, so a subword that is too rare (in the deep long tail) never earns a slot in the model's embedding matrix.

- Training Data: Models struggle with "Low-Resource" languages because those languages don't have enough data to fill out the frequency curve, leaving the "Long Tail" empty and the model's understanding shallow.
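The trade-off between vocabulary size and coverage can be illustrated with a simple frequency cutoff. This is only a sketch of the idea, not how BPE training actually selects merges, and tokenizers with byte-level fallback do not produce unknown tokens at all. The helper name `prune_vocab` and the `min_count` threshold are assumptions made for the example.

```python
from collections import Counter

def prune_vocab(tokens, min_count):
    """Keep tokens seen at least `min_count` times.

    Returns (kept_vocab, unknown_rate), where unknown_rate is the share of
    token occurrences that fall outside the kept vocabulary.
    Assumes `tokens` is non-empty.
    """
    counts = Counter(tokens)
    kept = {tok for tok, c in counts.items() if c >= min_count}
    dropped_occurrences = sum(c for tok, c in counts.items() if tok not in kept)
    return kept, dropped_occurrences / sum(counts.values())

toy = "the cat sat on the mat and the dog sat on the rug".split()
kept, unknown_rate = prune_vocab(toy, min_count=2)
print(sorted(kept), round(unknown_rate, 3))
```

In this toy sentence the cutoff removes most of the distinct words, and because the text is so short they still make up a large share of it. On a large corpus the long tail holds most of the distinct items but a much smaller share of the occurrences, which is what makes dropping it cheap.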

💡 Summary

Zipf's Law is the "Gravity" of linguistics. It shapes how we compress text, how we train models, and why some words are far more "valuable" to a tokenizer than others.

In our final lesson of this section, we'll wrap up everything we've learned about Tokens and Numbers!